Something strange is starting to show up in analytics.

Traffic is flat or even down. Yet conversions from certain sources are climbing. And content that appears to be “underperforming” keeps influencing decisions anyway.

The disconnect isn’t a measurement bug. It’s a shift in how the web is used.

Increasingly, people don’t start with a search result or a homepage. They start with an AI assistant or a large language model. The assistant reads, compares, summarizes, and decides what information is worth passing along.

Sometimes the AI sends a click; sometimes it doesn’t. But either way, it shapes what the user believes about your company before they ever reach your website. That creates a new problem for analytics.

Traditional KPIs are excellent at measuring outcomes during and after a visit: sessions, bounce rates, conversions. And most traffic channels, like organic search and paid media, provide in-depth data about the impressions and clicks they send your way. In contrast, LLMs provide no data about impressions or recommendations.

The central challenge for site owners and publishers in an AI-mediated web is to understand what happens inside this black box and quantify how it affects the bottom line.

This post outlines a practical set of KPIs that help you do just that. Starting with signals teams can measure today and moving toward more forward-looking metrics, we’ll explore what you need to start tracking to understand AI’s role in the discoverability of your business.

AI Referral Traffic

What it measures

AI Referral Traffic tracks the sessions where visitors land on your site after clicking links in AI assistant platforms — such as ChatGPT, Copilot, Gemini, Claude, or Perplexity — rather than from traditional search, social, or direct visits.

Why it matters

As AI assistants become a primary gateway to information, they are reshaping how discovery works online. Traditional search clicks are widely expected to decline as generative AI tools become the first stop for answering queries, particularly informational ones.

In this environment, simply measuring pageviews isn’t enough. You need to know when AI platforms are sending real human visitors to your site. Identifying AI Referral Traffic makes AI-driven discovery visible and actionable, helping you understand:

- Whether your content breaks through into AI-suggested results

- How often AI-driven sessions land on your site

- How these visitors behave compared to other channels

This signal is the first real indication that AI is affecting your funnel, even if raw volume is still small.

How to track it

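Several AI platforms tag their outbound links with UTM parameters (ChatGPT, for example, appends utm_source=chatgpt.com), and others can usually be identified by their referrer, so this metric is relatively easy to track. Many analytics tools already report traffic from this channel in their existing channel reports.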

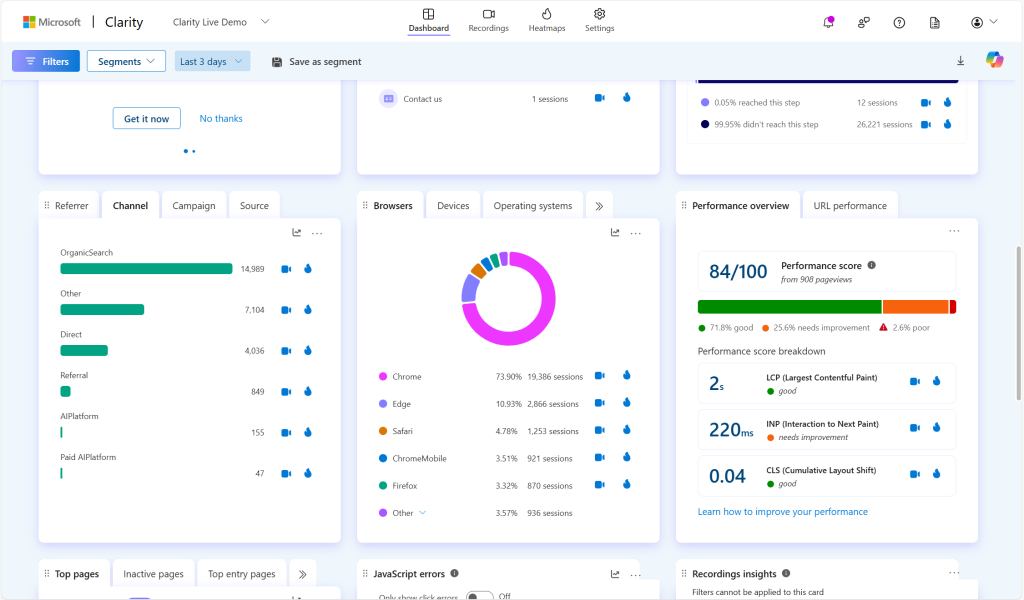

Clarity provides an added level of detail by breaking AI traffic into two dedicated channel groups: AI Platform (organic referrals) and Paid AI Platform (ads shown within AI experiences). This is becoming particularly relevant as rumors circulate about ChatGPT introducing ads to its platform.
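If your analytics tool does not segment this channel for you, the same idea can be approximated from raw session data. The sketch below is a minimal illustration, not how any particular tool does it: the `AI_DOMAINS` list and the `classify_ai_source` helper are assumptions made for this example, and the domain list is neither exhaustive nor guaranteed to stay current.

```python
from urllib.parse import urlparse, parse_qs

# Illustrative, non-exhaustive list of AI assistant domains (an assumption;
# verify it against the referrers you actually see in your own reports).
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
}


def _match_domain(host):
    """Map a hostname (or utm_source value) to a platform name, if known."""
    host = host.lower().removeprefix("www.")
    for domain, name in AI_DOMAINS.items():
        if host == domain or host.endswith("." + domain):
            return name
    return None


def classify_ai_source(landing_url, referrer=""):
    """Return the AI platform for a session, or None if it is not AI-referred."""
    # 1. Prefer an explicit utm_source on the landing URL.
    params = parse_qs(urlparse(landing_url).query)
    utm_source = params.get("utm_source", [""])[0]
    if utm_source:
        name = _match_domain(utm_source)
        if name:
            return name
    # 2. Fall back to the referrer hostname.
    host = urlparse(referrer).hostname or ""
    return _match_domain(host)


print(classify_ai_source("https://example.com/pricing?utm_source=chatgpt.com"))
print(classify_ai_source("https://example.com/", "https://www.perplexity.ai/search"))
```

Checking the UTM parameter first and the referrer second is a design choice for this sketch: referrers are often stripped by browsers or apps, so an explicit tag on the landing URL tends to be the more reliable signal when it exists.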

AI Referral Conversions

What it measures

AI Referral Conversions track how often visitors who arrive from AI assistants complete a meaningful action on your site, such as signing up, requesting a demo, subscribing, or making a purchase.

While AI Referral Traffic answers “Is AI sending us visitors?”, this metric answers the more important question: “Do those visitors actually matter to the business?”

Why it matters

In an AI-mediated web, volume alone is a misleading signal. AI assistants often pre-qualify users before they ever reach your site, summarizing options, narrowing choices, and guiding users toward specific next steps.

As a result, AI-referred visitors tend to arrive:

- With clearer intent

- Further along in their decision-making process

- More ready to act

Measuring AI Referral Conversions ties this emerging channel directly to leads, revenue, and outcomes, not just curiosity clicks. It’s the KPI that turns AI from an interesting traffic source into a credible growth lever.

This is also where skepticism fades. Even if AI traffic represents a small percentage of total sessions, a higher conversion rate reframes its importance. A channel that sends fewer visitors but converts significantly better can outperform higher-volume sources where it matters most.

How to track or approximate it

AI Referral Conversions are fully trackable today once AI referral traffic is segmented.

In practice, this means:

- Filtering sessions by AI Platform traffic

- Comparing conversion rates against search, social, and direct channels

- Evaluating which goals AI-referred visitors complete most often
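The three steps above can be sketched in a few lines once sessions are exported with a channel label and a completed goal. This is a hedged illustration only: the session dictionaries, the `channel_report` helper, and the sample numbers are all made up for the example and do not come from any real dataset or tool.

```python
from collections import Counter, defaultdict


def channel_report(sessions):
    """Summarize sessions, conversion rate, and top goals per channel.

    Each session is a dict with a "channel" label and a "goal" that is
    either the name of the completed goal or None (hypothetical schema).
    """
    totals = Counter()
    conversions = Counter()
    goals = defaultdict(Counter)
    for session in sessions:
        channel = session["channel"]
        totals[channel] += 1
        if session["goal"] is not None:
            conversions[channel] += 1
            goals[channel][session["goal"]] += 1
    return {
        channel: {
            "sessions": totals[channel],
            "conversion_rate": conversions[channel] / totals[channel],
            "top_goals": goals[channel].most_common(3),
        }
        for channel in totals
    }


# Made-up sample data for illustration only.
sample = (
    [{"channel": "AI Platform", "goal": "demo_request"}] * 2
    + [{"channel": "AI Platform", "goal": "signup"}] * 1
    + [{"channel": "AI Platform", "goal": None}] * 17
    + [{"channel": "Organic Search", "goal": "signup"}] * 10
    + [{"channel": "Organic Search", "goal": None}] * 190
)

report = channel_report(sample)
for channel, stats in report.items():
    print(f"{channel}: {stats['conversion_rate']:.1%} of {stats['sessions']} sessions")
```

In this invented sample, the AI channel sends a tenth of the sessions that organic search does but converts at three times the rate, which is the kind of comparison the metric is meant to surface.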

Bot and Crawler Activity

What it measures

Bot and Crawler Activity tracks how often indexing bots, AI crawlers, and LLMs access your content, including both helpful bots (e.g., those that power Copilot or ChatGPT) and less useful scraping agents. Rather than focusing on human sessions, this metric tells you how often AI systems see your content before any referral or conversion happens.

Why it matters

Monitoring this type of website activity provides top-of-funnel data that helps you understand the “why” behind your AI referral traffic and conversions, giving you early insight before any referral is recorded.

Say, for example, your AI referral traffic is low and not increasing. Analyzing bot and crawler activity can help you identify and address the underlying problem.

If your crawl activity is high but referrals are low: AI systems are reading your content but not choosing to send traffic. This pattern often points to visibility without relevance or clarity. The AI sees you but doesn’t “prefer” your content when synthesizing answers. This might signal issues with page messaging, structure, or semantic clarity.

If your crawl activity is low: Your site isn’t being seen by the systems that could recommend it. The solution then focuses on discoverability: improving internal linking, schema markup, technical structure, or even external signals that prompt AI crawlers to visit your pages in the first place.

You could even take this analysis a step further by exploring the relationship between crawl activity and referral traffic for each LLM.

This process mirrors how SEO teams use search impression metrics to troubleshoot visibility before worrying about clicks or conversions. Likewise, bot and crawler activity helps you diagnose AI visibility before you ever see a referral or conversion.
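The two diagnostic patterns described above can be expressed as a simple rule applied per AI platform. The following is a rough sketch under stated assumptions: the `diagnose` helper, both thresholds, and the weekly numbers are invented for illustration, and sensible cut-offs will differ from site to site.

```python
def diagnose(crawl_hits, referrals, min_crawl_hits=100, min_referral_rate=0.01):
    """Label a platform (or page) using the two patterns described above.

    The default thresholds are arbitrary placeholders, not recommendations.
    """
    if crawl_hits == 0 or crawl_hits < min_crawl_hits:
        return "low crawl: work on discoverability"
    if referrals / crawl_hits < min_referral_rate:
        return "high crawl, low referrals: review messaging, structure, and clarity"
    return "crawled and referred"


# Made-up weekly numbers per platform: (crawler hits, referred sessions).
activity = {
    "ChatGPT": (1200, 4),
    "Perplexity": (35, 2),
    "Copilot": (800, 40),
}

for platform, (hits, sessions) in activity.items():
    print(f"{platform}: {diagnose(hits, sessions)}")
```

The same function works with page URLs as keys instead of platform names if you want to run the diagnosis page by page.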

How to track it

Bot and crawler activity has traditionally been visible only through infrastructure‑level tools like server logs, CDNs, and bot management platforms, which capture every request and can distinguish between helpful AI agents and generic bots.
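As a rough illustration of the server-log approach, the sketch below counts hits per page by agent type from access logs in the common Combined Log Format. It rests on several assumptions: the token list in `AI_AGENT_TOKENS` is a small, non-exhaustive sample of publicly documented crawler names, the log format may not match your server’s configuration, and user-agent strings can be spoofed, so treat the output as directional.

```python
import re
from collections import Counter

# Illustrative, non-exhaustive user-agent tokens for AI crawlers and agents.
AI_AGENT_TOKENS = ("gptbot", "oai-searchbot", "chatgpt-user", "claudebot", "perplexitybot")
# Generic markers that usually indicate automation of some kind.
GENERIC_BOT_TOKENS = ("bot", "crawler", "spider")

# Combined Log Format:
# ip ident user [time] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def classify_agent(user_agent):
    """Bucket a user-agent string as ai_agent, traditional_bot, or human."""
    ua = user_agent.lower()
    if any(token in ua for token in AI_AGENT_TOKENS):
        return "ai_agent"
    if any(token in ua for token in GENERIC_BOT_TOKENS):
        return "traditional_bot"
    return "human"  # really "no bot marker found"; not proof of a person


def count_hits(lines):
    """Count requests per (agent type, path); lines that do not parse are skipped."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match:
            counts[(classify_agent(match["ua"]), match["path"])] += 1
    return counts


sample_log = [
    '203.0.113.5 - - [10/Jan/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/Jan/2025:10:00:05 +0000] "GET /pricing HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '198.51.100.7 - - [10/Jan/2025:10:01:00 +0000] "GET /blog HTTP/1.1" 200 2048 '
    '"https://chatgpt.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0"',
]

hits = count_hits(sample_log)
for (agent_type, path), n in sorted(hits.items()):
    print(agent_type, path, n)
```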

Now, behavior analytics tools are beginning to integrate these signals directly into their dashboards, making it easier for teams to see not just raw crawl volume but how AI interactions correlate with human behavior and conversions — bridging the gap between infrastructure data and actionable site insights.

Clarity now surfaces bot and AI crawler signals directly in your analytics so you can differentiate:

- AI agents (e.g., those associated with known LLM indexing or assistant platforms)

- Traditional bots (search engine crawlers, scrapers, generic automation)

- Human visitors

This allows you to:

- Measure overall bot activity trends over time

- Segment AI bot hits per page to understand where AI systems are spending time

- Compare bot activity to referral traffic trends to see where ingestion stops and recommendation begins

- Identify pages with high crawl rates but low referrals — a classic diagnostic pattern

AI Citations

What it measures

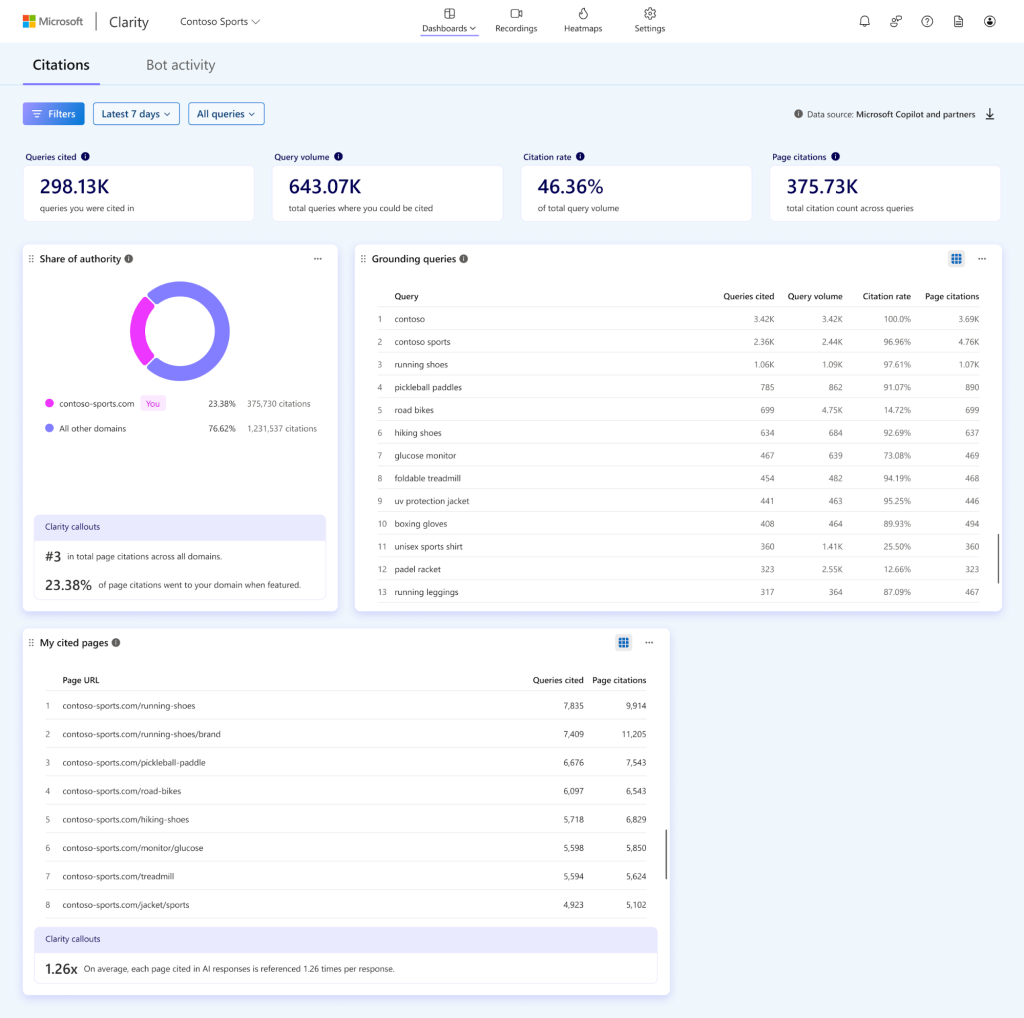

AI Citations track how often your content is used, referenced, or drawn on as grounding in AI-generated answers, even when the user never clicks through to your site. Unlike AI Referral Traffic, which captures human sessions, AI Citations reveal your influence upstream, showing when AI systems rely on your content to construct responses, summaries, or recommendations.

Why it matters

Zero-click AI experiences are changing the definition of visibility. Your content may shape how questions are answered without that influence ever showing up in traffic reports or referral clicks. AI Citations surface these hidden interactions, allowing publishers and brands to:

- See which content contributes meaningfully to AI responses

- Identify gaps where your work informs AI but is uncredited

- Detect topics where competitors gain more recognition

- Prioritize updates that improve clarity, structure, and authority

How to track it

Citation tracking for AI models is still an emerging measurement surface, and there is no universal, cross-platform standard for measuring citations across the web.

Many tools approximate AI citations by sampling prompts, generating AI responses, and scanning outputs for brand mentions or URLs. These approaches can provide directional insight, but they rely on simulated queries and inferred visibility rather than observed grounding activity inside AI systems.
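The scanning step of this sampling approach might look something like the sketch below. It is an assumption-heavy illustration: the `scan_response` helper, the brand “Contoso”, and the sample response are all invented, and the snippet does not call any AI platform. The response text would come from whatever prompt-sampling process you already run.

```python
import re
from urllib.parse import urlparse

# Loose URL matcher that stops at whitespace, closing brackets, and quotes.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def scan_response(text, brand, domain):
    """Check one AI-generated answer for a brand mention and cited URLs.

    `brand` is matched as a whole word, case-insensitively; `domain` matches
    the exact host or any subdomain of it.
    """
    mentioned = re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE) is not None
    cited = []
    for raw in URL_PATTERN.findall(text):
        url = raw.rstrip(".,;:")  # drop trailing sentence punctuation
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            cited.append(url)
    return {"brand_mentioned": mentioned, "cited_urls": cited}


# Invented sample response for illustration only.
sample_response = (
    "Contoso is a popular option (see https://www.contoso.example/pricing). "
    "Others include https://fabrikam.example/."
)

result = scan_response(sample_response, brand="Contoso", domain="contoso.example")
print(result)
```

Because this only inspects sampled outputs, it inherits the limitation described above: it shows what appeared in the answers you happened to generate, not what AI systems actually retrieved or grounded on.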

A more infrastructure-based approach connects citation reporting to the retrieval and grounding layer that powers AI-generated answers. For example, Clarity’s new AI Citations feature surfaces aggregated grounding and citation activity from supported AI experiences. Rather than scraping outputs, the dashboard reflects how your content participates in Microsoft’s grounding infrastructure — the retrieval layer that connects generative models to authoritative web content.

AI Recommendations

Status: North-Star / Forward-Looking

What it measures

AI Recommendations are a qualitative measure of how AI systems suggest your product, service, or brand in response to commercial or decision-oriented prompts.

This is different from AI Citations. A citation may credit your content as a source. A recommendation, however, signals something deeper: the AI is positioning your offering as a solution.

Why it matters

As informational traffic increasingly shifts to zero-click AI answers, the real strategic value moves downstream, from being referenced to being recommended.

What will matter most is whether AI systems:

- Suggest your product in a comparison

- Include your service in a shortlist

- Mention your brand in a “best tools for…” answer

- Recommend your solution in a buying scenario

That is the new battleground.

If AI becomes the interface between users and the web, then influencing its recommendations becomes as important as ranking #1 in search once was. AI Recommendations represent a shift from measuring traffic to measuring persuasion.

How to approximate it today

There are currently no standardized, widely available analytics tools that systematically track AI recommendations at scale. Unlike crawl data or citations, recommendation visibility is harder to surface directly.

However, teams can approximate it by:

- Mapping key buying scenarios and transactional prompts (e.g., “best CRM for small businesses,” “tools like X but cheaper”).

- Testing comparison and decision-stage queries across major AI platforms.

- Tracking whether your brand appears, how it’s described, and where competitors are positioned.

- Monitoring changes over time as content, positioning, and authority evolve.
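One lightweight way to keep this manual research consistent is to log each test as a structured observation and summarize the log over time. The sketch below is only a suggestion: the `Observation` record, the `summarize` helper, the brand names, and the sample entries are all hypothetical, and the brand order is assumed to be recorded by hand from each answer.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    """One manual test of a buying-scenario prompt on one AI platform."""
    date: str
    platform: str
    prompt: str
    # Brands in the order they appeared in the answer, recorded by hand.
    brands_in_order: list = field(default_factory=list)


def summarize(observations, brand):
    """Return how often `brand` appears and its average position when it does."""
    appearances = [o for o in observations if brand in o.brands_in_order]
    rate = len(appearances) / len(observations) if observations else 0.0
    positions = [o.brands_in_order.index(brand) + 1 for o in appearances]
    average_position = sum(positions) / len(positions) if positions else None
    return {"appearance_rate": rate, "average_position": average_position}


# Invented log entries for illustration only.
log = [
    Observation("2025-01-06", "ChatGPT", "best CRM for small businesses",
                ["Contoso", "Fabrikam"]),
    Observation("2025-01-06", "Perplexity", "best CRM for small businesses",
                ["Fabrikam", "Northwind"]),
    Observation("2025-01-06", "Copilot", "tools like Fabrikam but cheaper",
                ["Northwind", "Fabrikam", "Contoso"]),
]

summary = summarize(log, "Contoso")
print(summary)
```

Re-running the same prompts on a regular schedule and comparing summaries by date gives a simple trend line, though AI answers vary between runs, so small changes should be read with caution.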

AI recommendations occupy the bleeding edge of analytics in the AI-mediated web. With careful qualitative research, it’s possible to understand how AI influences commercial intent and even scale those insights.

Turning that understanding into quantifiable, actionable metrics represents a new horizon for measuring impact, where influence happens before a click, a view, or a session has ever been logged.